In an increasingly fast-paced, hectic world, upgrading products seems essential, even indispensable, for work, and falling behind becomes a problem…

Some hardware manufacturers, mobile ones for example, even force upgrades to new firmware and OS versions: they download them automatically onto devices, taking up space; false system errors appear when the new OS comes out and "unintentionally" make a reset necessary to update the system; and they often prevent you from going back, or require you to be a super geek to do so. I have personally witnessed the problems caused by two leading companies in the sector: resetting the devices to the previous system by hacker methods (because for one of the two there is no way to downgrade, indeed it is blocked by the company's server) restores functionality perfectly, on a clean device, whereas doing the upgrade blocks the product, slows it down or makes it useless.

Now, if you are here, it is to talk about still cameras or video cameras. In the past I already dabbled in hacking Panasonic firmware thanks to the tool made by the Russian hacker Vitaliy, both using ready-made presets, including the famous Flowmotion, and creating variants of my own, to optimize the output of the Panasonic GH2, an excellent VDSLR that, once hacked, was able to compete with much higher-end cameras.

Today we see that an upgrade does not always equal an advantage. I switched to digital cinema cameras long ago, in particular Blackmagic Design. I have highlighted the pros and cons in other articles and expressed my thoughts; depending on your needs and tastes you may like them or not, but for my needs they are close to perfection, and the results far exceed the cost.

I bought mine a year and a half ago, when the firmware was still very basic; slowly, with increasingly sophisticated firmware releases, the camera has become a reliable, quality production tool.

Recently, needing to shoot in low light, I noticed a defect that I had not noticed in the past, because there was no…

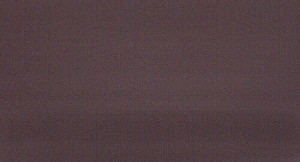

Having filmed my granddaughter in the dark, or rather lit only by the light of the kitchen TV, so almost in the dark, I noticed a very dense black halo on the left side, and a similar but less intense one along the top.

I immediately became uneasy and ran some tests at different light levels. The problem appeared in the absence of light: pushing the signal by 5 stops in post, on an image shot with the lens cap on, made it stand out, as in the JPEG.
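For anyone who wants to repeat the test with numbers rather than by eye, here is a minimal sketch of the idea. It is not the workflow I used in post, only an illustration under some assumptions: the frame is a list of rows of linear values between 0 and 1, and the function names (`push_stops`, `column_means`, `left_edge_deficit`) are hypothetical, made up for this example. A push of 5 stops is simply a gain of 2 to the power of 5, that is 32.

```python
import random


def push_stops(frame, stops):
    """Brighten a frame by `stops` stops (gain of 2**stops), clipping at 1.0."""
    gain = 2.0 ** stops
    return [[min(1.0, value * gain) for value in row] for row in frame]


def column_means(frame):
    """Average each column over all rows, which smooths out random noise."""
    rows = len(frame)
    return [sum(column) / rows for column in zip(*frame)]


def left_edge_deficit(frame, width):
    """Mean of the remaining columns minus the mean of the leftmost `width` columns.

    A clearly positive value means the left edge is darker than the rest.
    """
    means = column_means(frame)
    left = sum(means[:width]) / width
    rest = means[width:]
    return sum(rest) / len(rest) - left


# Synthetic stand-in for a lens-cap frame (an assumption, not real camera data):
# faint noise everywhere, with the four leftmost columns at half level.
rng = random.Random(0)
frame = [
    [rng.uniform(0.002, 0.004) * (0.5 if x < 4 else 1.0) for x in range(32)]
    for _ in range(16)
]

pushed = push_stops(frame, 5)
print(f"left-edge deficit before push: {left_edge_deficit(frame, 4):.4f}")
print(f"left-edge deficit after push:  {left_edge_deficit(pushed, 4):.4f}")
```

Averaging by column is a deliberate choice: a dark band along one side is constant from row to row, while noise is not, so the band survives the average and the noise mostly cancels.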

Sure that it had not been there before, I started doing some research and came across a post right on the BMD site about this very problem with the new firmware…

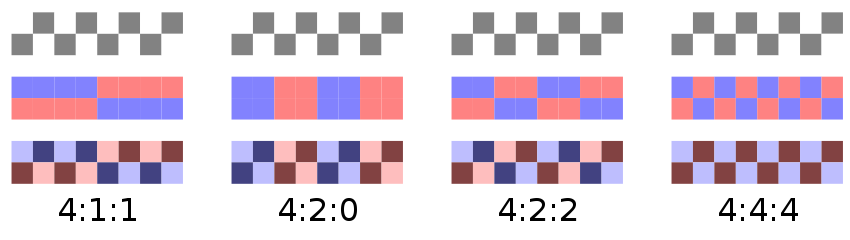

After some experiments, and with a lot of patience (changing the firmware on the camera takes about 15 minutes each time), I found that the low-light result differed depending on the firmware.

Version 1.9.5, pushed by 5 stops, shows a slight form of banding, absent when there is some light signal. More than natural on an image like this.

Version 2.0.1 shows slightly more pronounced banding.

Version 2.4 introduces theoretical machine optimizations (I would be inclined to think otherwise) and otherwise only adds on-screen guides for the 1.84 and 2.40 formats. For those I can use the guides of the external monitor, or the dear old acetate sheet with the marks on the control monitor, so I can happily do without this version altogether.

So I conclude that, to date, always being up to date is not worth it at any cost, and that you have to know how to look back, because what I would immediately have put down to a hardware defect is actually related to the camera's software.

Now I can already imagine the detractors of the BMC ready with their outraged comments, but I will stop them by pointing out that the first Alexa I tried, a camera that costs 20 (twenty) times as much as the BMPC4K, was in the following condition at release:

- audio was not recorded in camera, because the firmware did not take the camera's XLR inputs into account

- it recorded in ProRes, three flavours, internally only in Full HD; 2K recording was only available from an external recorder that would arrive 6 months later

- one recording in every 3-4 was skipped, without warning, so you always had to check the footage, otherwise you risked finding a take missing from the scene

- the amount of noise in the first release was monstrous; I remember the first footage, and it looked like it came from a DSLR.

- it carries a brand that has been a guarantee in cinema for decades, so people were demanding, yet they did not complain about it on the forums, because they were working with that camera… Then again, film cameras jammed, scratched the footage, etc.

So this post is not here to complain about the problem, which BMD has been informed of in great detail, but to offer a solution to those who need to fix it on the fly: go back to firmware 1.9.5.

I would like to point out that in life those who complain into the wind waste time,

while those who use their ingenuity and evolve move forward and solve problems 😀